- What happened?

- Blunderers

- More trouble

- Barbra Streisand

- What does Copilot say about it?

- ✅ Why It Was Banned

- ❗ What happened next (the “Streisand effect”)

- 📝 In short

- 1. Avoid keyword bans — focus on behavior, not vocabulary

- 2. Communicate why moderation happens — before taking action

- 3. Use community moderators, not heavy‑handed corporate filtering

- 4. Allow venting — channel it instead of fighting it

- 5. Improve the product — the best long‑term fix

- 6. Never lock the entire server unless safety demands it

What happened?

An internet firestorm in Mickeysoft's Discord. They take themselves just a bit too seriously. You can have all the money and tech in the world, but you still don't have the human spirit under control.

Blunderers

This is shaping up to be the blunder of 2026. A nail in their own coffin. They were already under fire, in a period when game vloggers have been making vlogs en masse about their first baby steps toward Linux. After all, gamers were their most loyal audience for 30 years; Linux was for nerds who don't game.

More trouble

Valve started putting SteamOS on hardware. PewDiePie started in April '25, and more have followed since. It hardly matters anymore these days. Windows 12 will be (mark my words, this is top secret, pure bluff) a Windows window on a Linux host with as many separate containers as possible, from LXC and LXD to snap, flatpak and Docker.

Microsoft's AI has also been trashed for months because of the mediocre 'sloppy' (literally: dirty) AI that you apparently get with Copilot on Windows 11 (never seen it, it's turned off at my work). That sloppiness has earned them the nickname Microslop.

Barbra Streisand

They still don't know the Barbra Streisand effect. In 2003 the world-famous, somewhat older singer tried to chase a photo off the internet and, as you can see, it strikes back. In 2003 Microsoft was still sitting neatly in the office (in 2026 they are up to date with a Discord and in warm contact with their audience), so I think they must have missed that uproar.

Now they have banned that word from their Discord. And after that they locked the whole server, because of course all hell broke loose. And the internet is on fire again.

Of course, the internet rarely leaves things there. Shortly after Windows Latest posted about Copilot Discord server blocking Microslop on X, users began experimenting in the server with variations such as “Microsl0p” using a zero instead of the letter “o.” Predictably, those versions slipped past the filter. Keyword moderation has always been something of a cat-and-mouse game, and this isn’t any different.

slashdot
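To make that cat-and-mouse game concrete, here is a minimal Python sketch. It is not Microsoft's or Discord's actual filter; the blocklist, the function names and the substitution table are all made up for illustration. It shows a naive substring filter missing "Microsl0p", and a slightly smarter one that catches it but still misses the next variation.

```python
# Hypothetical blocklist, for illustration only (not the real server filter).
BLOCKED_TERMS = {"microslop"}

# Assumed digit-to-letter table for common "leetspeak" substitutions.
LEET_MAP = str.maketrans({"0": "o", "1": "l", "3": "e", "4": "a", "5": "s"})


def naive_filter_blocks(message: str) -> bool:
    """Return True if the lowercased message contains a blocked term."""
    lowered = message.lower()
    return any(term in lowered for term in BLOCKED_TERMS)


def normalized_filter_blocks(message: str) -> bool:
    """Same check, after mapping look-alike digits back to letters."""
    normalized = message.lower().translate(LEET_MAP)
    return any(term in normalized for term in BLOCKED_TERMS)


if __name__ == "__main__":
    for text in ["Microslop", "Microsl0p", "Micro-slop"]:
        print(text, naive_filter_blocks(text), normalized_filter_blocks(text))
```

Every normalization rule you add invites a new spelling that gets around it, which is exactly why this kind of filter never really wins.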

In short, plenty to feast on again.

Mind you, managers retire at 53 over this.

What does Copilot say about it?


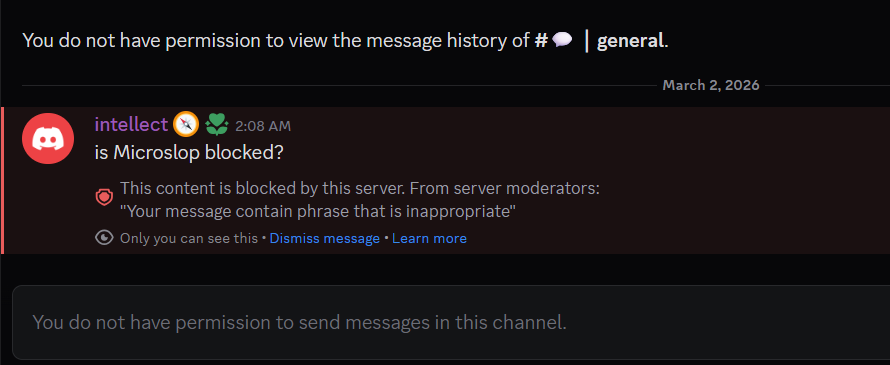

The word “Microslop” was banned on Discord — specifically in Microsoft’s official Copilot Discord server — because Microsoft’s moderation team temporarily flagged it as an “inappropriate” or “prohibited” phrase as part of an effort to control spam, harassment, and disruptive behavior in the server.

Here’s what happened, based on multiple reports:

✅ Why It Was Banned

1. Microsoft was trying to slow down spam attacks

Microsoft told reporters that the server had been targeted by spammers posting disruptive or harmful content. To slow this down, they added temporary filters for certain terms — including “Microslop.” [futurism.com]

2. The term is a popular mocking nickname for Microsoft’s AI direction

“Microslop” is widely used online as a pejorative aimed at Microsoft’s AI-heavy strategy, including Copilot’s integration across Windows 11. Moderation filters blocked the term automatically, triggering notices that it was considered inappropriate. [windowslatest.com]

3. The ban was meant to be temporary

Microsoft later said these term bans were short-lived steps while they built better moderation tools. [futurism.com]

❗ What happened next (the “Streisand effect”)

The ban backfired spectacularly:

- Users quickly began posting variations like “Microsl0p” or using different Unicode characters to bypass the filter. [windowslatest.com], [newsweek.com]

- The server became chaotic, leading Microsoft to lock it down, hide message history, and restrict access temporarily. [futurism.com]

- After backlash and widespread mockery, the term was eventually unblocked. [windowscentral.com]

📝 In short

The word “Microslop” was banned because Microsoft added keyword filters to combat spam, and the term itself had become a widespread insult targeting Microsoft’s AI decisions. The ban wasn’t permanent — but it caused enough backlash that the situation spread widely online.

If you want, I can summarize the timeline, or explain why “Microslop” became such a popular term in the first place.

Here’s a practical, non‑backfiring, Copilot‑style approach Microsoft could take to reduce harassment like the “Microslop” spam without triggering a Streisand‑effect meltdown.

No search is needed here — this is guidance, not a factual query — so here’s a clean, strategy‑focused answer.

✅ What Copilot Would Likely Recommend

A calm, transparent, non‑explosive way to reduce harassment

1. Avoid keyword bans — focus on behavior, not vocabulary

Blocking a specific word (especially a meme) almost guarantees users will escalate it.

Instead, Copilot would recommend:

- Moderating spam patterns, not phrases

- Using automation to detect mass posting, copy–paste floods, bot behavior

- Allowing harmless memes unless they cross lines (racism, threats, doxxing, etc.)

This keeps users from feeling censored while still stopping actual disruption.
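As a rough illustration of moderating behavior instead of vocabulary, the sketch below flags a user who posts too many messages, or the same message too often, inside a short time window. The class name, the thresholds and the window length are assumptions chosen for the example, not anything a real Discord bot or Microsoft is known to use.

```python
from collections import defaultdict, deque

WINDOW_SECONDS = 10.0  # assumed look-back window
MAX_MESSAGES = 5       # assumed per-user message limit inside the window
MAX_DUPLICATES = 3     # assumed limit for identical messages inside the window


class FloodDetector:
    """Hypothetical per-user flood detector; it never inspects vocabulary."""

    def __init__(self) -> None:
        # user_id -> deque of (timestamp, normalized text)
        self._history = defaultdict(deque)

    def is_flooding(self, user_id: str, text: str, now: float) -> bool:
        history = self._history[user_id]
        history.append((now, text.strip().lower()))
        # Drop entries that have fallen out of the look-back window.
        while history and now - history[0][0] > WINDOW_SECONDS:
            history.popleft()
        latest = history[-1][1]
        duplicates = sum(1 for _, seen in history if seen == latest)
        return len(history) > MAX_MESSAGES or duplicates >= MAX_DUPLICATES
```

With these assumed thresholds, one person saying "Microslop" once is left alone, while the same person pasting it three times within ten seconds is flagged, whatever the word happens to be.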

2. Communicate why moderation happens — before taking action

The crisis happened mostly because Microsoft didn’t explain what they were doing.

A Copilot‑friendly approach would be:

- Post a short message in the server explaining:

- “We’re having spam attacks — we’re enabling some temporary safeguards.”

- “Legitimate criticism is welcome; we are only moderating spam behavior.”

- Give users clear expectations about what the filters aim to do.

When people understand the why, they react far more reasonably.

3. Use community moderators, not heavy‑handed corporate filtering

Users take rule enforcement much better when it comes from trusted community mods rather than corporate staff.

Copilot would suggest:

- Recruiting respected community members as volunteer moderators

- Giving them tools to warn, mute, and timeout disruptive users

- Letting the community participate in setting conduct guidelines

This builds trust and prevents the impression that Microsoft is silencing criticism.

4. Allow venting — channel it instead of fighting it

People will joke and complain. One smart strategy:

- Create a #feedback-and-rants or #memes channel

- Allow “Microslop” jokes there

- Keep main channels focused on support and discussion

When users have a designated place to blow off steam, they usually behave better elsewhere.

5. Improve the product — the best long‑term fix

The meme “Microslop” caught on because users were frustrated.

If frustrations decrease, so does harassment.

Copilot would recommend:

- A visible roadmap for improving stability, reliability, and user control

- More transparency about AI decisions

- Occasional “State of Copilot” Q&A sessions with engineers

- Publicly acknowledging real issues instead of ignoring them

When users see effort and honesty, they are far less hostile.

6. Never lock the entire server unless safety demands it

Full lockdown amplified the backlash.

A safer approach:

- Slow mode

- Temporary mutes for offending users

- Channel‑by‑channel lockdowns rather than shutting down the whole server

This limits disruption without punishing the entire community.
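Discord has a built-in slow mode, so nobody needs to write this themselves; the sketch below only illustrates the idea of a per-channel, per-user cooldown. The `SlowMode` class and its method names are hypothetical and do not reflect how Discord implements the feature.

```python
class SlowMode:
    """Hypothetical cooldown tracker: one accepted message per user,
    per channel, per cooldown period."""

    def __init__(self, cooldown_seconds: float) -> None:
        self.cooldown_seconds = cooldown_seconds
        # (channel, user_id) -> timestamp of the last accepted message
        self._last_post = {}

    def try_post(self, channel: str, user_id: str, now: float) -> bool:
        key = (channel, user_id)
        last = self._last_post.get(key)
        if last is not None and now - last < self.cooldown_seconds:
            return False  # still cooling down; the message is rejected
        self._last_post[key] = now
        return True
```

Because the cooldown is tracked per channel and per user, a flood in one channel is throttled while everyone else, and every other channel, keeps working.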

⭐ A One‑Sentence Summary

Focus on spam behavior, not keywords; communicate openly; empower the community; give feedback channels; and improve the product — all of which reduce harassment without igniting a backlash.

If you want, I can help you turn this into:

- a moderation policy,

- a communication plan, or

- a “best practices” doc for your own community.